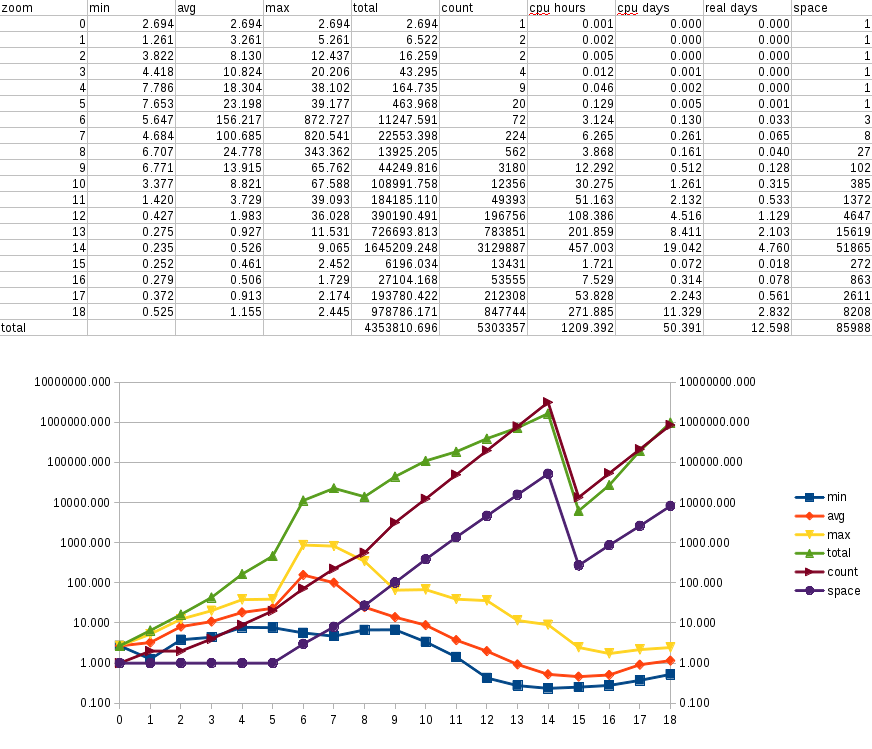

Detailed OSM mapnik rendering times

In the last post I concluded that I would reimport Europe's data before applying a new version of my design. This also means that I will have to render everything from time to time. How long does this take? Let's answer that question.

Remember first that I didn't quite import all of Europe, just a rectangle with North UK, Portugal, Malta and Istanbul as limits, so I'll just render that part. Then comes the question of how deep to go. OSM's wiki has some information about tile usage that shows that only up to zoom level (ZL) 11 were all the tiles viewed at least once, and that after ZL 15 the percentage drops below 10. I strike my balance at 14, where there's a one in three chance of the tile being seen. Then I render specific regions down to ZL 18, mostly my current immediate area of influence and places I will visit.
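To get a feeling for how fast the tile count grows with each ZL, here is a minimal sketch that counts the tiles covering a bbox, using the standard slippy map tile formula. The bbox coordinates are rough guesses of mine for the rectangle described above, not the values used in the import, and the function names are made up for this example.

```python
import math

def deg2num(lat_deg, lon_deg, zoom):
    """Return the (x, y) tile that contains a WGS84 point at the given zoom."""
    lat_rad = math.radians(lat_deg)
    n = 2 ** zoom
    x = int((lon_deg + 180.0) / 360.0 * n)
    y = int((1.0 - math.asinh(math.tan(lat_rad)) / math.pi) / 2.0 * n)
    # Clamp so that points on the east/south edge stay inside the grid.
    return min(max(x, 0), n - 1), min(max(y, 0), n - 1)

def tiles_in_bbox(west, south, east, north, zoom):
    """Count the tiles needed to cover a bbox at one zoom level."""
    x_min, y_min = deg2num(north, west, zoom)  # top-left tile
    x_max, y_max = deg2num(south, east, zoom)  # bottom-right tile
    return (x_max - x_min + 1) * (y_max - y_min + 1)

# ASSUMPTION: approximate bbox, only for illustration.
WEST, SOUTH, EAST, NORTH = -10.0, 35.0, 30.0, 60.0

if __name__ == "__main__":
    for zoom in range(0, 15):
        print(zoom, tiles_in_bbox(WEST, SOUTH, EAST, NORTH, zoom))
```

Each extra ZL roughly quadruples the count, which is why the choice of the cut-off ZL matters so much for the total rendering time.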

So with the same bbox as the import step, I fired up the rendering process and left the machine mostly by itself. I say mostly because, in the end, it's my main machine, and I kept using it for hacking, mail, browsing, chat, etc., but nothing very CPU, RAM or disk intensive. I modified generate_tiles.py so it measures the time spent on each tile and logs that into a file. Then I ran another small Python script on it to get the minimum, average, maximum and total times, how many tiles were rendered and the space they take [1]. Here's a table and graph that summarize it all:

The scale for all the lines is logarithmic, so all those linear progressions there are actually exponential. You can also see the cut at zoom level 14, and that I ended up rendering more or less as many tiles for ZLs 15 to 18 as for ZLs 9 to 13.

The first thing to notice is that the average curve is similar to, but considerably lower than, the one I had in my minimal benchmark.

Most important, and something I didn't think about then, are the total time columns. I have 4 of them: 'total' is the total CPU time in seconds, then I have one for the same time in hours and another in days. As I actually have 4 CPUs and I run generate_tiles.py on all of them, I have an extra column that shows an approximate wall clock time. In the bottom row you can see totals for the times, tile count and space used.
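The kind of summary described above can be sketched like this. It is not the actual script; I'm assuming a log with one line per tile holding the zoom level and the render time in seconds, separated by whitespace. The wall clock column simply divides the CPU time by the number of CPUs, which assumes perfect parallelism and is only an approximation.

```python
from collections import defaultdict

CPUS = 4  # the machine described in the post has 4 CPUs

def summarize(lines):
    """Group per-tile render times by zoom level and compute the stats.

    ASSUMPTION: each line looks like "<zoom> <seconds>"; the real log
    format may well be different.
    """
    per_zoom = defaultdict(list)
    for line in lines:
        line = line.strip()
        if not line:
            continue  # skip blank lines
        zoom, seconds = line.split()
        per_zoom[int(zoom)].append(float(seconds))

    rows = {}
    for zoom, times in sorted(per_zoom.items()):
        total = sum(times)  # total CPU time in seconds
        rows[zoom] = {
            "tiles": len(times),
            "min": min(times),
            "avg": total / len(times),
            "max": max(times),
            "total": total,
            "hours": total / 3600,
            "days": total / 86400,
            # Rough wall clock estimate: CPU time spread evenly over all CPUs.
            "wall_days": total / 86400 / CPUS,
        }
    return rows
```

It could be run as `summarize(open('tiles.log'))`, where `tiles.log` is a placeholder file name.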

Talking about space, there's another constraint there. See, I have a 64GiB Nokia N9, which is one of, if not the, intended purposes for this map. Those 64GiB must be shared mostly with music, so I don't get bored while driving (especially in the endemic traffic jams in this region). This means that having up to ZL 14 on my phone is unfeasible, but ZL 11 seems more reasonable. It also means that I will have to make sure I have what I consider important info at least at that ZL, so it impacts the map design too.

So, 12.6 days and 84GiB later, I have my map. Cutting out ZLs 12 to 14 should save me up to 8 days of rendering, most probably 7. I could also try some of the rendering optimizations I have found so far (in no particular order):

https://github.com/mapnik/mapnik/wiki/OptimizeRenderingWithPostGIS

https://github.com/mapnik/mapnik/wiki/Postgis-async

http://mike.teczno.com/notes/mapnik.html

http://blog.cartodb.com/post/20163722809/speeding-up-tiles-rendering

I was also thinking of doing depth-first rendering, so requests done for ZL N can be used to render ZL N+1 (or maybe the other way around). This seems like a non-obvious path, so I will probably only look into it if I have lots of time, which I currently don't.
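Just to illustrate the order in which tiles would be visited, here is a minimal sketch of a depth-first walk over the tile pyramid. It relies only on the usual slippy map convention that tile (x, y) at ZL N covers tiles (2x, 2y) to (2x+1, 2y+1) at ZL N+1. The function names are made up, and nothing here reuses any query results; it only shows the traversal.

```python
def children(x, y, z):
    """Return the four tiles at zoom z+1 covered by tile (x, y, z)."""
    return [(2 * x + dx, 2 * y + dy, z + 1)
            for dy in (0, 1) for dx in (0, 1)]

def depth_first(x, y, z, max_zoom):
    """Yield a tile and then, recursively, everything below it."""
    yield (x, y, z)
    if z < max_zoom:
        for child in children(x, y, z):
            # Finish the whole subtree of one child before the next one.
            yield from depth_first(*child, max_zoom)
```

With this order, a tile at ZL N is immediately followed by the tiles it covers at ZL N+1, instead of by its neighbours at the same ZL.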

-

[1] Actually, the space used is calculated by `du -sm *`, so it's in MiB.